Deepfakes are becoming a major problem worldwide. They can be used to spread false information or to discredit someone, and their potential use in elections is a particular concern. The administration has begun preparing for this. According to reports, the government plans to deploy a team of deepfake detection specialists ahead of the 2024 Lok Sabha elections.

The Home Ministry’s cyber wing is set to develop a deepfake detection tool. Sources claim that the I4C (Indian Cyber Crime Coordination Centre) section of the MHA (Ministry of Home Affairs) and the BPRD (Bureau of Police Research and Development) are working extensively on it.

According to ministry sources, police at every cyber police station in the country will receive this detection tool, enabling them to detect deepfake videos.

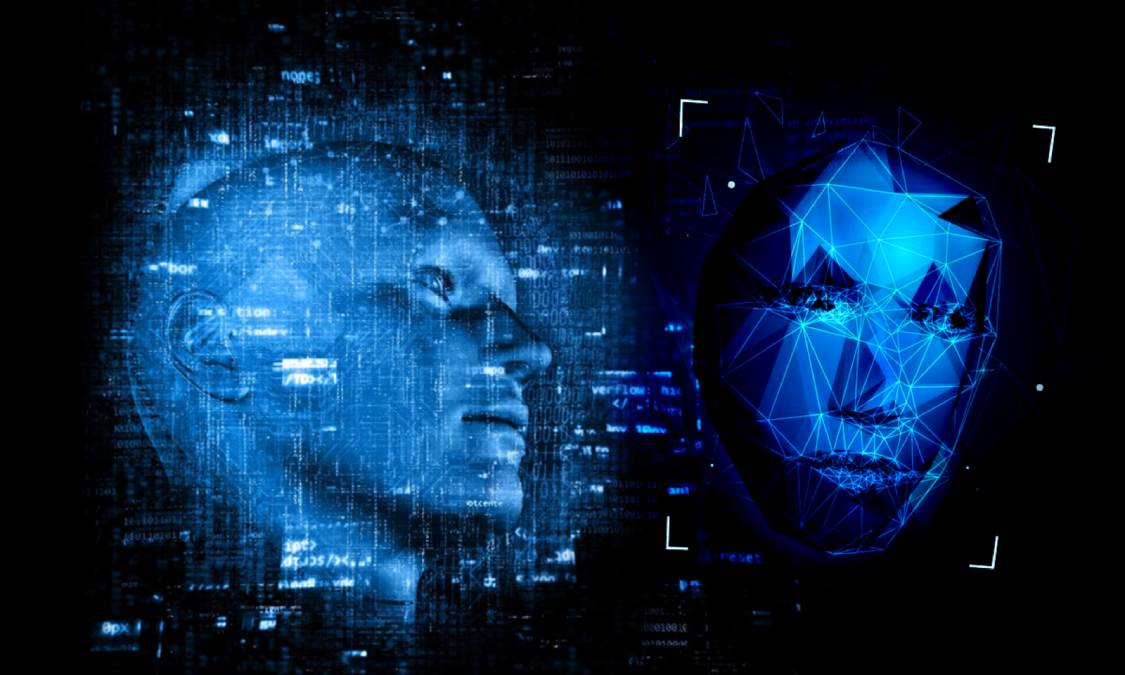

What Is Deepfake?

A deepfake can be thought of as an advanced form of photo morphing or video editing. A person’s publicly available images and videos are used to produce a fake video of that person. Such videos can be used to spread false information or to discredit someone.

They can also be used to incite social unrest and to target opponents in political campaigns. Sources close to the MHA cyber wing claim that the tool will not only identify deepfake videos but also help identify their creators. This is the first time the government is developing a tool to counter deepfakes, and it is a significant step toward preventing misuse of the technology.

Tips To Avoid This Problem

Creating a fake video requires as many images and videos of the subject as possible. The more images and videos of a person are available, the more effectively a deepfake of that person can be produced.

Given this, you should let as few people as possible see your pictures and videos. Keep the images and videos on your social media accounts private. In addition, before believing any video, verify the information with a reliable source to avoid falling for a deepfake.
